Claude 4.6's new effort parameter doesn't just set how hard the model thinks; it changes how thoroughly it acts. If you're swapping in Opus 4.6 with effort=low expecting it to behave like other providers' low-reasoning modes, you may be surprised.

tl;dr: Anthropic's new effort parameter for Claude 4.6 controls more than reasoning depth. Unlike thinking_level (Gemini) or reasoning.effort (OpenAI), which primarily set an upper bound on how much the model thinks, effort also governs how lazily the model behaves in general: how many tool calls it issues, how thoroughly it cross-references results, and whether it follows system prompt instructions about research methodology. We discovered this the hard way when Opus 4.6 at effort=low effectively ignored our system prompt during evals. Bumping to effort=medium fixed it.

You might be in for a rude awakening if you're thinking of replacing Gemini 3 Pro (thinking_level=low) with Opus 4.6 (effort=low). The new effort parameter controls not only reasoning depth but also how lazily the model behaves in general: the number of tool calls it issues, how thoroughly it cross-references the preliminary results it found, and so on.

This can be quite surprising, especially if you are used to controlling this kind of behaviour in your system prompt rather than via your choice of LLM. In our trace analysis we observed some rather odd behaviour: Opus 4.6 (effort=low) seemingly ignored the system prompt instructing it to reason thoroughly in our Deep Research Bench (DRB) evals. In this particular case effort=medium did the trick: it was the lowest effort setting at which Opus 4.6 didn't ignore instructions on when and how to do web research.
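The "lowest effort setting that still follows instructions" rule can be sketched as a small helper. This is only an illustration: `lowest_compliant_effort`, the `adherence_rate` callback, and the 0.9 threshold are hypothetical names and values, not part of our eval harness or any provider SDK. The callback stands in for whatever trace analysis you use to score instruction following at a given effort level.

```python
from typing import Callable, Optional, Sequence

# Ordered from cheapest to most expensive; "max" is Opus-only.
EFFORT_LEVELS: Sequence[str] = ("low", "medium", "high", "max")


def lowest_compliant_effort(
    adherence_rate: Callable[[str], float],
    threshold: float = 0.9,  # hypothetical cut-off, tune for your own evals
    levels: Sequence[str] = EFFORT_LEVELS,
) -> Optional[str]:
    """Return the first (cheapest) effort level whose adherence rate
    meets the threshold, or None if no level does."""
    for level in levels:
        if adherence_rate(level) >= threshold:
            return level
    return None


if __name__ == "__main__":
    # Made-up placeholder scores for illustration only, not measured results.
    placeholder = {"low": 0.3, "medium": 0.95, "high": 0.96, "max": 0.96}
    print(lowest_compliant_effort(lambda level: placeholder[level]))
```

In practice `adherence_rate` would run a handful of eval tasks at the given effort level and return the fraction of traces in which the system prompt's research instructions were followed.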

How did we get here?

The options to influence reasoning models' depth of thinking have been far from standardised across providers, but they've generally fallen into two categories. The first is setting an upper bound on the number of tokens generated in thinking blocks, usually via something like budget_tokens. The second is controlling reasoning depth through an effort-style parameter: reasoning.effort for OpenAI models, reasoning_effort via a beta header for some older Anthropic models, and thinking_level for Gemini models.

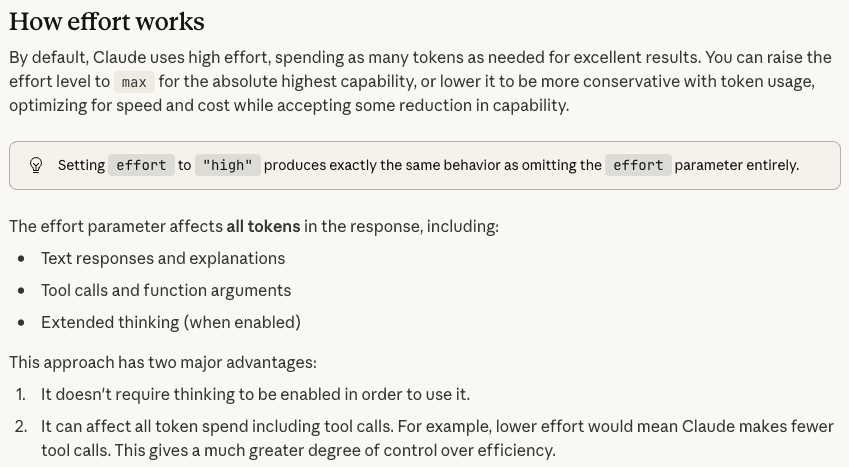

With Claude 4.6, Anthropic decided to introduce yet another parameter: effort. It accepts the same values as existing "depth of reasoning" parameters: low, medium, and high, plus max for Opus only.
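To make the overlap concrete, here is a rough sketch of how the same "low" ends up in differently named fields. It builds plain dictionaries and calls no API. The style names (`token_budget`, `openai`, `gemini`, `claude_4_6`) and the function itself are made up for illustration, and where exactly each field nests inside a real request is an assumption that should be checked against each provider's docs.

```python
from typing import Any, Dict

COMMON_LEVELS = ("low", "medium", "high")


def reasoning_params(style: str, level: str = "low",
                     budget_tokens: int = 2048) -> Dict[str, Any]:
    """Build the reasoning-related request fragment for one parameter style.

    Hypothetical helper: field placement is simplified and assumed.
    """
    if style == "token_budget":
        # Category 1: cap the tokens generated in thinking blocks.
        return {"thinking": {"type": "enabled", "budget_tokens": budget_tokens}}

    # Category 2: effort-style parameters. "max" is only valid for effort.
    allowed = COMMON_LEVELS + ("max",) if style == "claude_4_6" else COMMON_LEVELS
    if level not in allowed:
        raise ValueError(f"{level!r} is not valid for style {style!r}")

    if style == "openai":
        return {"reasoning": {"effort": level}}
    if style == "gemini":
        return {"thinking_level": level}  # nesting omitted (assumption)
    if style == "claude_4_6":
        return {"effort": level}  # nesting omitted (assumption)
    raise ValueError(f"unknown style: {style!r}")


if __name__ == "__main__":
    for style in ("token_budget", "openai", "gemini", "claude_4_6"):
        print(style, reasoning_params(style))
```

A one-to-one mapping like this is exactly what makes the swap tempting. The values line up, but for effort the same string also changes how thoroughly the model acts, so matching values does not mean matching behaviour.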

Overall this looks like a win for plug-and-play solutions, but as someone who prefers a little more control over my agents, it feels a bit odd to me. What do you think?

(To be fair, Anthropic was about as transparent as possible about this in their docs, and I take full blame for not reading them properly before kicking off our evals.)
